F1 Score Calculation

The F1 Score of the confusion matrix combines precision and recall into a single, comprehensive metric of segmentation performance. Recall measures how well the neural network matches the area of the labeled defect regions, and precision measures how well it avoids mistaking other areas of an image for defects. Both precision and recall must be calculated before the F1 Score of the confusion matrix can be obtained. Note that the precision, recall, and F1 Score described here are calculated differently from those in Region Area Metrics, which are calculated at the pixel level.

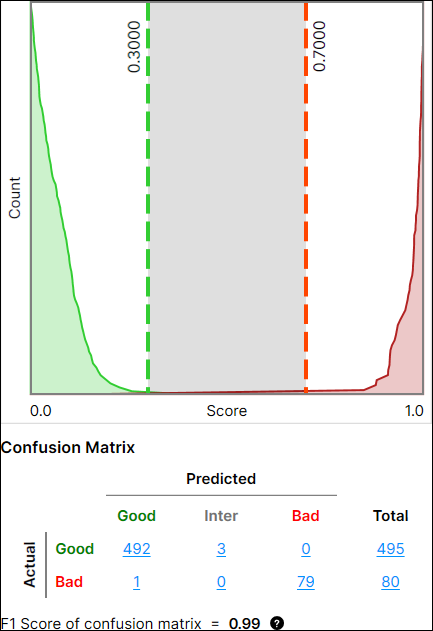

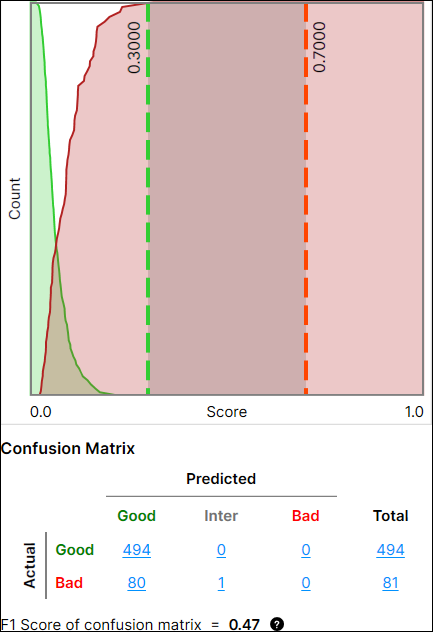

The F1 Score of the confusion matrix changes with the option selected in the Count dropdown. It is interpreted as the harmonic mean of the precision and recall of the current confusion matrix. If a view or a region is predicted as "Inter," it is counted as "Bad" when calculating precision, recall, and F1 Score:

- (Actual Good, Predicted Inter) → (Actual Good, Predicted Bad)
- (Actual Bad, Predicted Inter) → (Actual Bad, Predicted Bad)
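The remapping above can be sketched in a few lines of Python. This is only an illustration: the function name `collapse_inter` and the sample pairs are hypothetical and not part of the product.

```python
from collections import Counter

def collapse_inter(pairs):
    """Return (actual, predicted) pairs with every "Inter" prediction counted as "Bad"."""
    return [
        (actual, "Bad" if predicted == "Inter" else predicted)
        for actual, predicted in pairs
    ]

# Hypothetical per-view results, used only for illustration.
pairs = [
    ("Good", "Good"),
    ("Good", "Inter"),
    ("Bad", "Inter"),
    ("Bad", "Bad"),
]

# Count each (actual, predicted) cell of the resulting 2x2 confusion matrix.
confusion = Counter(collapse_inter(pairs))
print(confusion[("Good", "Bad")])  # 1
print(confusion[("Bad", "Bad")])   # 2
```

After the remapping, no "Inter" column remains, so the matrix reduces to the four Good/Bad cells used in the rest of the calculation.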

Before walking through the calculation, note how each cell of the confusion matrix is interpreted for each class:

For predicting "Good" class:

- 1 "Actual Good - Predicted Good" pair stands for 1 "True Positive (TP)"
- 1 "Actual Bad - Predicted Good" pair stands for 1 "False Positive (FP)"
- 1 "Actual Bad - Predicted Bad" pair stands for 1 "True Negative (TN)"
- 1 "Actual Good - Predicted Bad" pair stands for 1 "False Negative (FN)"

For predicting "Bad" class:

- 1 "Actual Bad - Predicted Bad" pair stands for 1 "True Positive (TP)"
- 1 "Actual Good - Predicted Bad" pair stands for 1 "False Positive (FP)"
- 1 "Actual Good - Predicted Good" pair stands for 1 "True Negative (TN)"
- 1 "Actual Bad - Predicted Good" pair stands for 1 "False Negative (FN)"
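The two mappings are mirror images of each other, which a small lookup makes explicit. The sketch below assumes the confusion matrix is stored as a dictionary keyed by `(actual, predicted)`. The helper name `class_counts` is hypothetical, and the counts are those used in the worked example in this section.

```python
def class_counts(matrix, positive):
    """Return TP, FP, TN, FN when `positive` ("Good" or "Bad") is the class being predicted.

    `matrix` maps (actual, predicted) -> count.
    """
    negative = "Bad" if positive == "Good" else "Good"
    return {
        "TP": matrix[(positive, positive)],  # actual positive, predicted positive
        "FP": matrix[(negative, positive)],  # actual negative, predicted positive
        "TN": matrix[(negative, negative)],  # actual negative, predicted negative
        "FN": matrix[(positive, negative)],  # actual positive, predicted negative
    }

# Keys are (actual, predicted).
matrix = {
    ("Good", "Good"): 495,
    ("Good", "Bad"): 0,
    ("Bad", "Good"): 23,
    ("Bad", "Bad"): 57,
}

print(class_counts(matrix, "Good"))  # {'TP': 495, 'FP': 23, 'TN': 57, 'FN': 0}
print(class_counts(matrix, "Bad"))   # {'TP': 57, 'FP': 0, 'TN': 495, 'FN': 23}
```

Switching the positive class swaps TP with TN and FP with FN, which is why the two classes generally get different precision and recall values from the same matrix.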

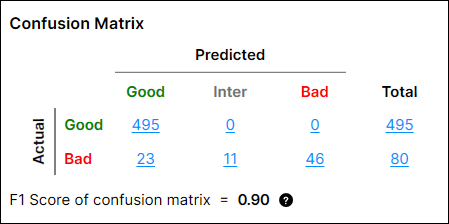

Given this confusion matrix, the precision, recall, and F1 Score for the "Good" class are calculated as follows:

- Precision = TP / (TP + FP) = 495 / (495 + 23) = 0.956
- Recall = TP / (TP + FN) = 495 / (495 + 0) = 1
- F1 Score = 2 * Recall * Precision / (Recall + Precision) = 2 * 1 * 0.956 / 1.956 = 0.978

The precision, recall, and F1 Score for the "Bad" class are calculated as follows:

- Precision = TP / (TP + FP) = 57 / (57 + 0) = 1
- Recall = TP / (TP + FN) = 57 / (57 + 23) = 0.713
- F1 Score = 2 * Recall * Precision / (Recall + Precision) = 2 * 1 * 0.713 / 1.713 = 0.832
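The per-class arithmetic above can be reproduced with a short Python sketch. The function name `precision_recall_f1` is hypothetical. Returning 0.0 when a denominator is zero is an assumption made here so the function always returns a number. The source does not say how the product handles that case.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, f1) for one class from its TP, FP, and FN counts."""
    # Assumption: an undefined ratio (zero denominator) is reported as 0.0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1

# "Good" class: TP = 495, FP = 23, FN = 0
good_precision, good_recall, good_f1 = precision_recall_f1(tp=495, fp=23, fn=0)

# "Bad" class: TP = 57, FP = 0, FN = 23
bad_precision, bad_recall, bad_f1 = precision_recall_f1(tp=57, fp=0, fn=23)

print(f"Good: P={good_precision:.4f} R={good_recall:.4f} F1={good_f1:.4f}")
print(f"Bad:  P={bad_precision:.4f} R={bad_recall:.4f} F1={bad_f1:.4f}")
```

Because this sketch does not round the intermediate precision and recall, its results can differ from the hand-calculated figures in the last decimal place. For example, the "Good" class F1 comes out near 0.9773 rather than 0.978.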

The F1 Score of the confusion matrix is then the unweighted average of the two per-class F1 Scores:

- 0.5 * (F1 Score of Good class) + 0.5 * (F1 Score of Bad class) = 0.5 * 0.978 + 0.5 * 0.832 ≈ 0.90
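As an end-to-end check, the final score can be computed directly from the four cells of the confusion matrix. The sketch below uses the identity F1 = 2TP / (2TP + FP + FN), which is algebraically equal to the harmonic mean of precision and recall. The function names and the `<actual>_<predicted>` argument naming are hypothetical, and the zero-denominator fallback is an assumption.

```python
def f1_from_counts(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN); returns 0.0 if the denominator is zero (assumption)."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def confusion_matrix_f1(good_good, good_bad, bad_good, bad_bad):
    """Average the per-class F1 Scores; arguments are counts named <actual>_<predicted>."""
    # For the "Good" class, FP = actual Bad predicted Good, FN = actual Good predicted Bad.
    f1_good = f1_from_counts(tp=good_good, fp=bad_good, fn=good_bad)
    # For the "Bad" class the roles of the two off-diagonal cells are swapped.
    f1_bad = f1_from_counts(tp=bad_bad, fp=good_bad, fn=bad_good)
    return 0.5 * f1_good + 0.5 * f1_bad

score = confusion_matrix_f1(good_good=495, good_bad=0, bad_good=23, bad_bad=57)
print(f"{score:.2f}")  # 0.90
```

Weighting both classes equally means the smaller "Bad" class influences the final score as much as the much larger "Good" class.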

| Good Performance | Poor Performance |
| --- | --- |